On December 18, 2019, we were invited to stage a public intervention at Stereolux on the theme of "tricking the digital." Under fictional identities, we attempted to give shape to a possible future for data work and data citizenship (which are closely intertwined here). It took the form of case studies, supported by speculative artifacts and services that lent the presentation some degree of plausibility, while attempting (albeit in a friendly way) to lure the audience into accepting the fiction as fact: even the Q&A was conducted in diegesis, before everything was revealed.

This is a transcript of our talk, illustrated with the artifacts that gave life to our worldbuilding and slightly edited for reading.

Nantes, Dec 18 2019.

“How many of you would agree to hand your personal data to a company in return for nothing at all?” Amused silence. “How many are enrolled in a loyalty program of any kind: Air Miles, supermarket membership, Amazon Prime…?” Awkwardly, as if shrouded in sudden guilt, all hands rise. “Why subscribe to the very thing you so categorically reject?” we ask. “Out of convenience? For discounts? These benefits,” we argue, “are pure fiction: a self-fulfilling prophecy fueled by our collective compliance.”

Towards the Ethical Monetization of Data

The talk is given by Korantin Spiegel and Azhar Abassi, two fictional personas respectively playing an expert in digital markets and the project lead of EXCEED, the new (and equally fictional) European Executive Commission for the Ethical Exchange of Data. Its title: “Euromesh, Dataculture & Private Property: towards the Ethical Monetisation of Data.” Coordinated by Design Friction and hosted at Stereolux, Nantes, it concludes a day of talks and workshops entitled “Tricking the Digital,” which, as one might expect, led to passionate debate on privacy, protection, and the politics of data. Throughout the day, and even more so at the start of this lecture, we questioned the reasons behind our efforts to protect our privacy: from whom, and from what? A dictatorial government? Evil corporations? Do we fear malevolent, Big Brother-style surveillance, or simply negligence in the absence of an appropriate legislative framework, as in the case of the jogging app Strava, which, during a marketing coup, revealed the running paths of users around the world and unwittingly exposed the locations of secret military bases? Are we trying to escape the wrath of hackers, or do we revere them for their humor and revolutionary actions? The protection of privacy, we say, is a fundamental right; it cannot, however, be the sole driving force of progress, and we must avoid sinking into a form of digital conservatism. Instead, digital activists must use their knowledge and skills to offer realistic political alternatives.

Data for Social Good

With data steadily on its way to becoming digital gold, now seems like a critical time to assess its current implications and future trajectory. Could we, for instance, veer away from capitalist inclinations and reroute the data economy toward a new social model? And, while we are at it, top it off with new rights for digital citizens? As hands go down, we move on to a less trivial thought experiment. Who here would give their medical data, of every kind, down to DNA sequencing, if it could save their own life? Hands are back up. The life of a loved one? No one budges. The life of someone you don’t know? Hesitation. Who would give their medical data to advance medical research? The audience is divided. A 2016 article published in Wired, titled with a quote from molecular and computational biology specialist Eric Schadt, claims that “The Cure for Cancer Is Data—Mountains of Data.” The text goes on to describe how sequencing the DNA of 10 million people would help build datasets complete enough for AI to solve the problem for good. Although technocentric, such a prospect sparks hopes of lower mortality and reinvigorated economies: in 2015 alone, cancer had a worldwide economic impact of 895 billion US dollars. These hopes, however, come with concerns about eugenics and further discrimination, especially when speculating on new avenues for inequality such as genetic predispositions or access to health insurance. Change of slide: “Data and Environment,” or how the harvesting of ecological and consumption-related data could make systems more adaptable and reactive, so that only the resources that are truly needed get produced; the best waste, after all, is the waste that is never produced. We show GAIAI, part of a project we ran with the Potsdam Institute for Advanced Sustainability Studies, as a presumably successful example of ‘algorithmic environmental governance,’ and move on.
“Data vs Culture”: can data about how we consume culture, and entertainment in particular, truly inform the production of tailored content, as opposed to plain viewing metrics, which reflect marketing budgets more than any true measure of content quality? How customizable can this become? We leave the question hanging. “Data vs Government”: here, we discuss personal behavior as a political act and, with the example of the political cryptoparty developed during a design fiction workshop in Mexico City, look at how the machine and its statistical governance could be a better ruler than the usual corrupt politicians. Can it really?

After this warm-up, we finally unveil our case for a new data economy, portraying it as a realistic path to a true universal basic income, a concept already shifting from the hands of the interventionist state to those of the free market, with the idea of “return on investment” replacing that of the public good. Somewhere at the convergence of these two worlds lies the possibility of monetary compensation based on the ethical exchange of data, overseen by a strong intergovernmental body. Three main challenges must be addressed to achieve this organization:

1. Access to truly free and open data, pushing digital citizens to become informed actors of the system rather than mere users.

2. A secure, inclusive, and widespread standard for datasets, striving for fast and easy interoperability.

3. High-quality data that is readable, useful, contextualized, labelled, complete, and has received full and unequivocal consent from its source, the citizen.

“Good news, everyone,” we interject: legislating on standards is exactly what the European Union does best. We claim to have come up with solutions to these exact needs, and to have put together a framework encompassing both their technical and legal aspects.

This speculative EU-based initiative culminates in a new status, that of the ‘European Digital Citizen,’ the cornerstone of a new and powerful model meant as an alternative to both the American ultraliberal, market-driven data economy and the authoritarian social scoring system made in China. Once fully implemented, it is designed to function as a zero-sum economy generating wealth through a system that is complex in depth but simple on the surface. To clarify, we pull up the example of an experimental Rotterdam nightclub built around an infrastructure that harvests electricity from the motion of dancers to power its lights.

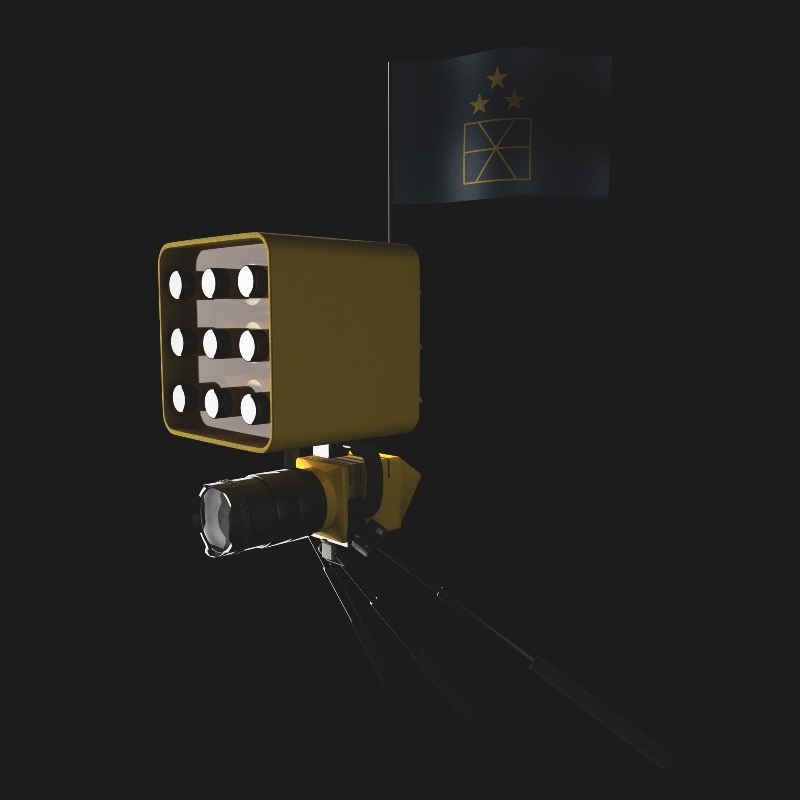

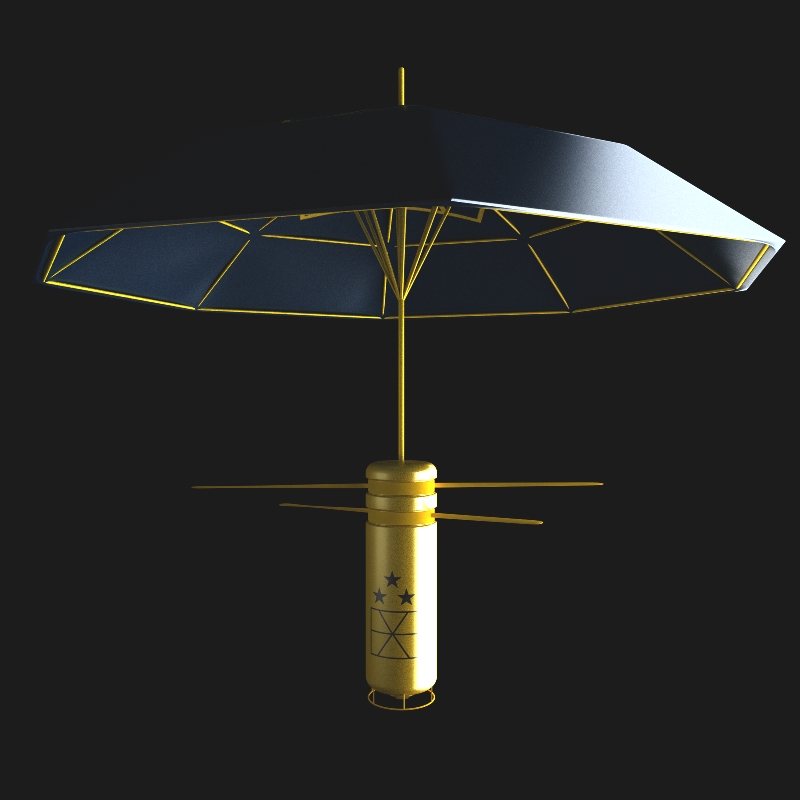

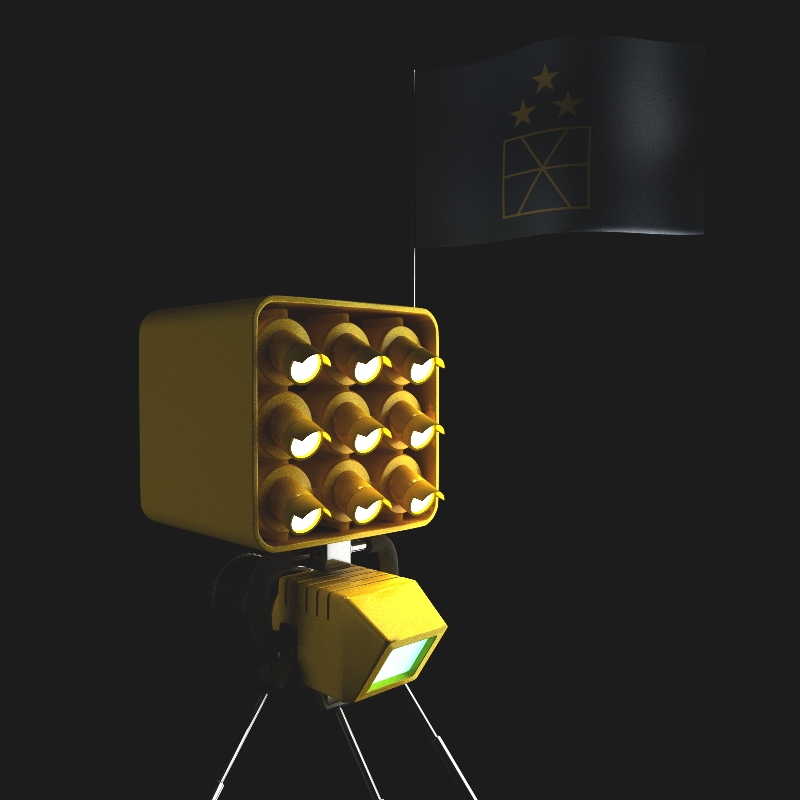

2017–2019: The Pilot Project

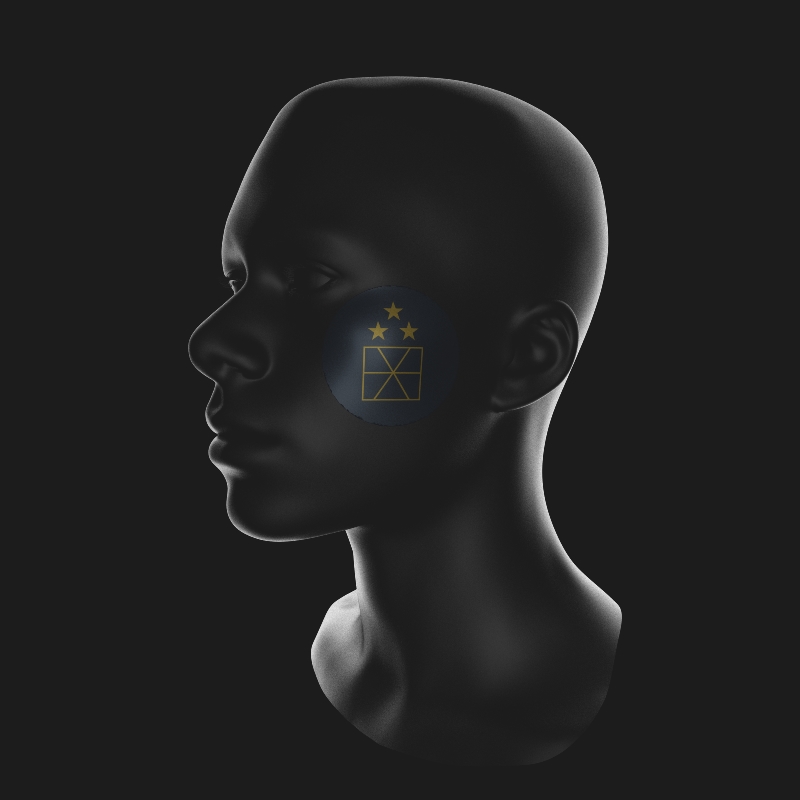

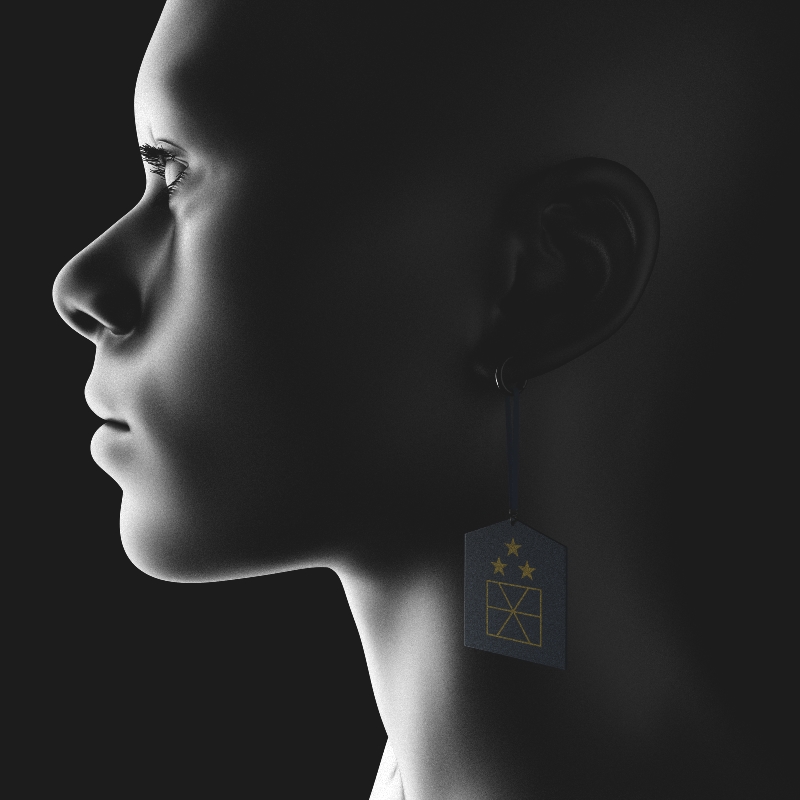

The show goes on with more of the nitty-gritty: for nearly two years, a pilot project set in Eindhoven (NL), in collaboration with the city's Technical University, has been offering some 7,500 residents of the city and its surroundings the possibility to take part in an early implementation. The experiment offers various benefits based on three basic levels of involvement. ‘Basic’ users, who give the minimum consent required to take part (DNA sequencing and collection of biometric, visual, geolocation, interaction, and consumption data), receive compensation from local partners: food baskets covering basic needs, free local transportation, a standard energy package (200 kWh/m²/year/person), privileged access to housing, and health insurance covering all medical needs as well as emergencies and paediatrics. These digital citizens wear markers showing their affiliation to the EU-backed system. The facial wearables are detected by infrared cameras, letting monitoring infrastructures quickly identify member citizens and check their individual data-collection permissions, while turning a blind eye to non-members, whose personal information cannot be collected. Members are also given a new “digital European citizen’s passport.” ‘Passive’ users go a step further by enabling additional data detection (environmental, psychological, social) without extra effort on their part, whereas ‘Actives,’ colloquially known as ‘Harvesters,’ step out of their daily routine to accomplish special missions for collecting, checking, or labelling data, becoming de facto data workers in their own right. Precise and efficient data collection calls for precise and efficient devices.
To this end, EXCEED’s advanced data arsenal has been entirely conceived by Roland-Geert Van Den Boerderij, aka ‘RGB,’ an illustrious (fictional) Dutch designer known for his socially engaged work in fashion at large and his “humanistic” style, ensuring that these new everyday objects would also bring joy to their users. The more time citizens spend working on data, the more benefits they receive, spanning telecommunications, maintenance, leisure, culture, and even luxury services and items.

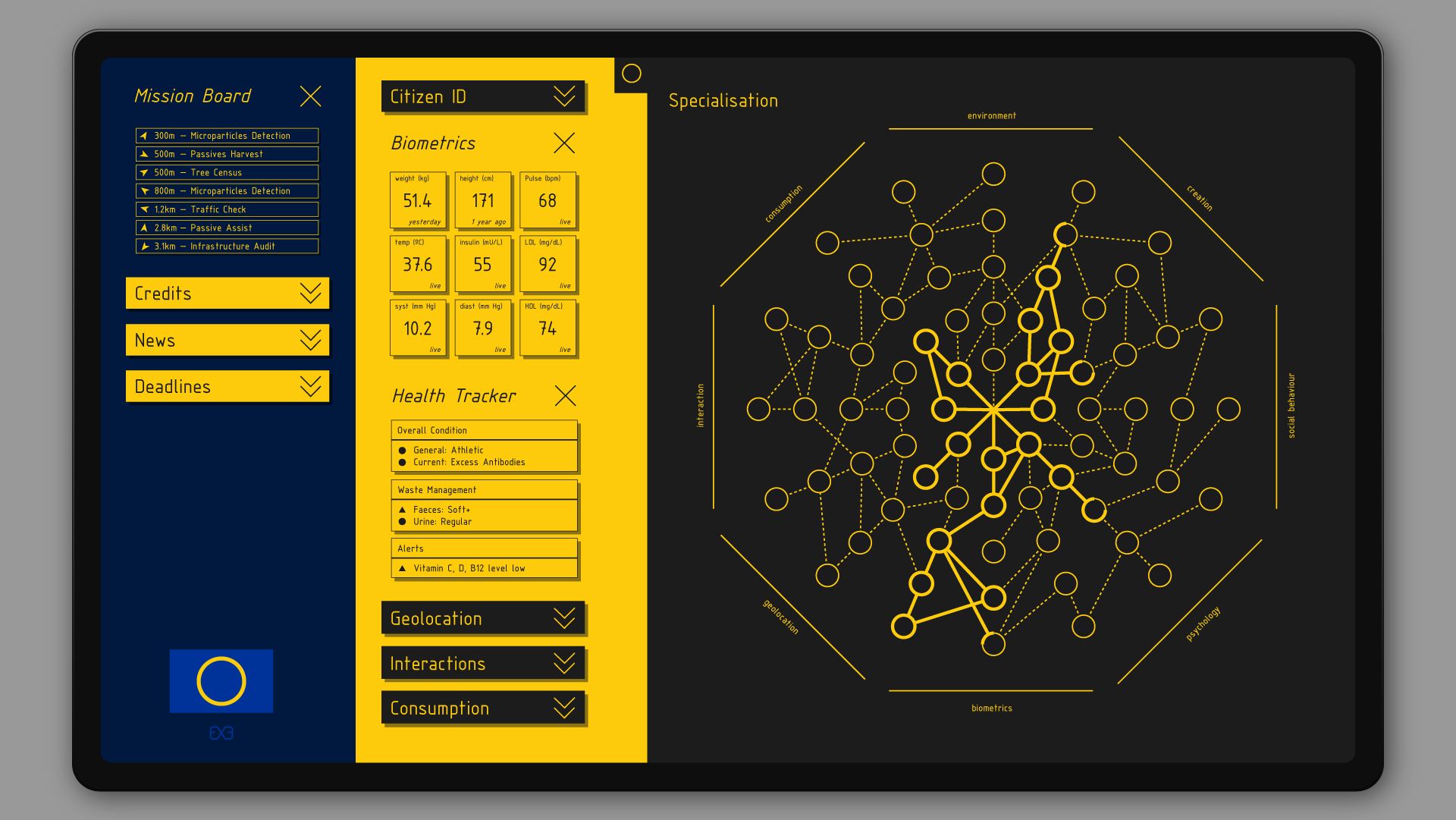

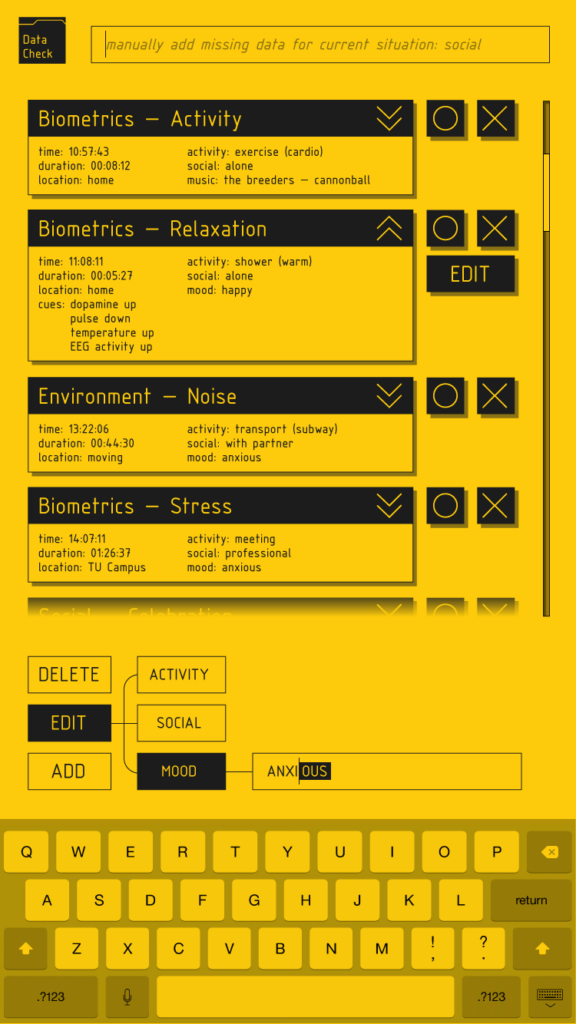

Aside from markers, all citizens engaged in active data collection are required to make their presence loud and clear to the public. With this in mind, the other tools developed by studio RGB include mobile, human-operated infrared cameras (the system forbids fully autonomous capture devices and incentivizes a human-based approach to data collection), sports wearables, gaze-tracking head mounts, galvanic skin response and pulse oximetry wristbands, a DNA sampling kit, biopills enabling citizens to collect and communicate inner-body biometrics, and more. Although complex to manage, the system is accessible through a simple front end that lets citizens progressively develop specializations through a skill tree, unlock new devices and missions, and follow the system’s needs on a dedicated mission board. This dashboard gives them a full overview of their capture devices and collected data, as well as complete and instant control over their various consent channels.

The Biggest Lie

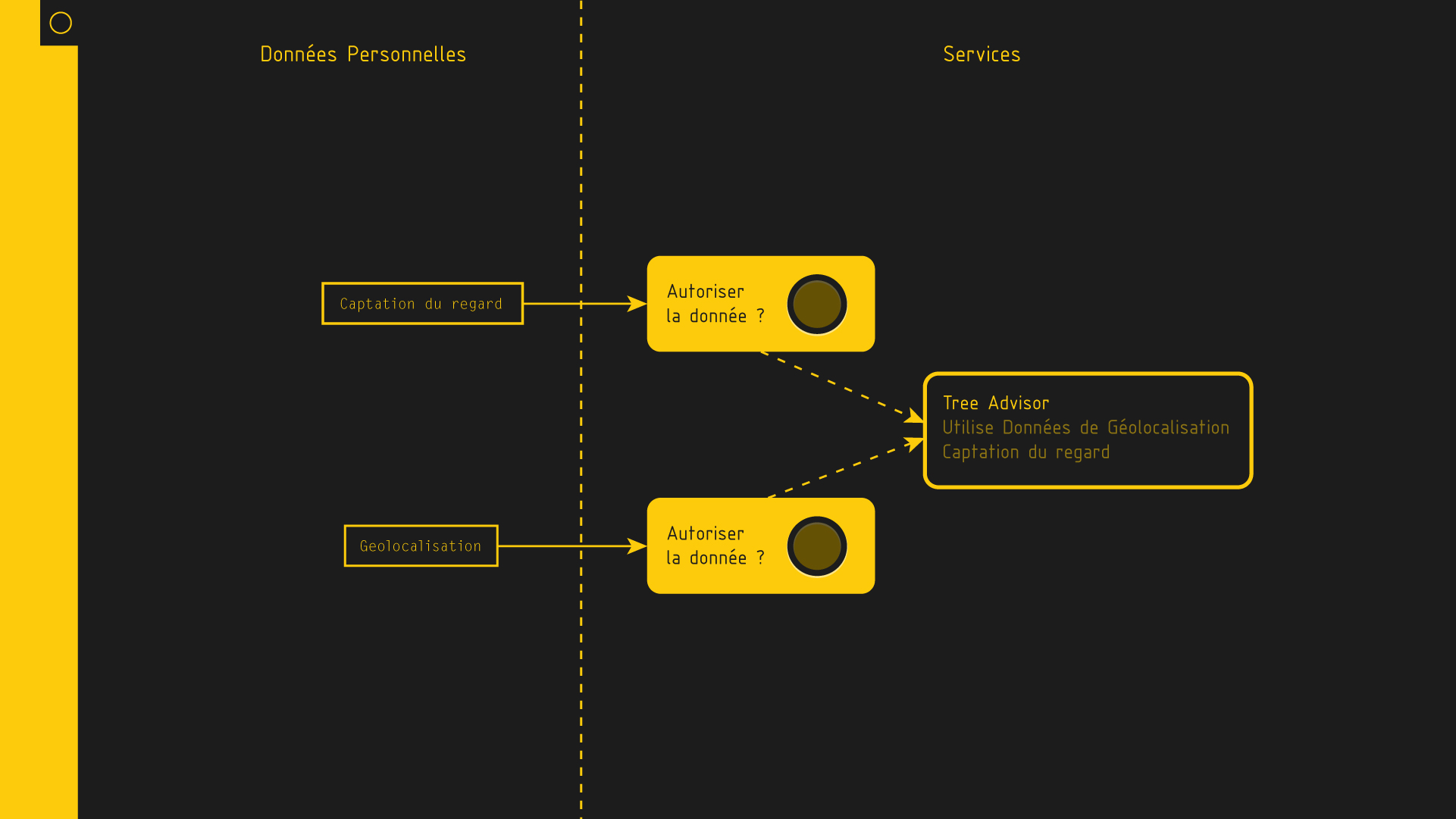

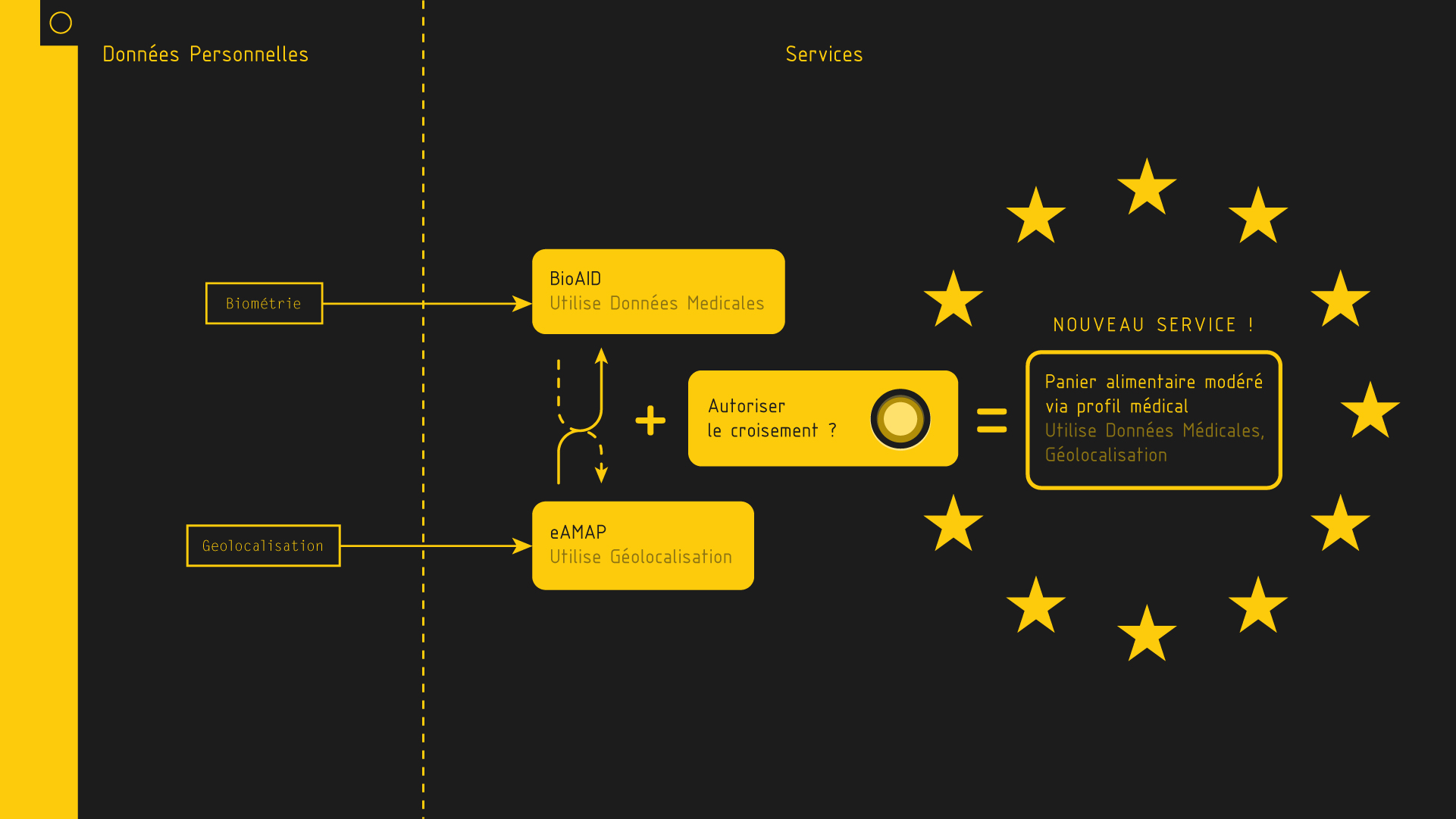

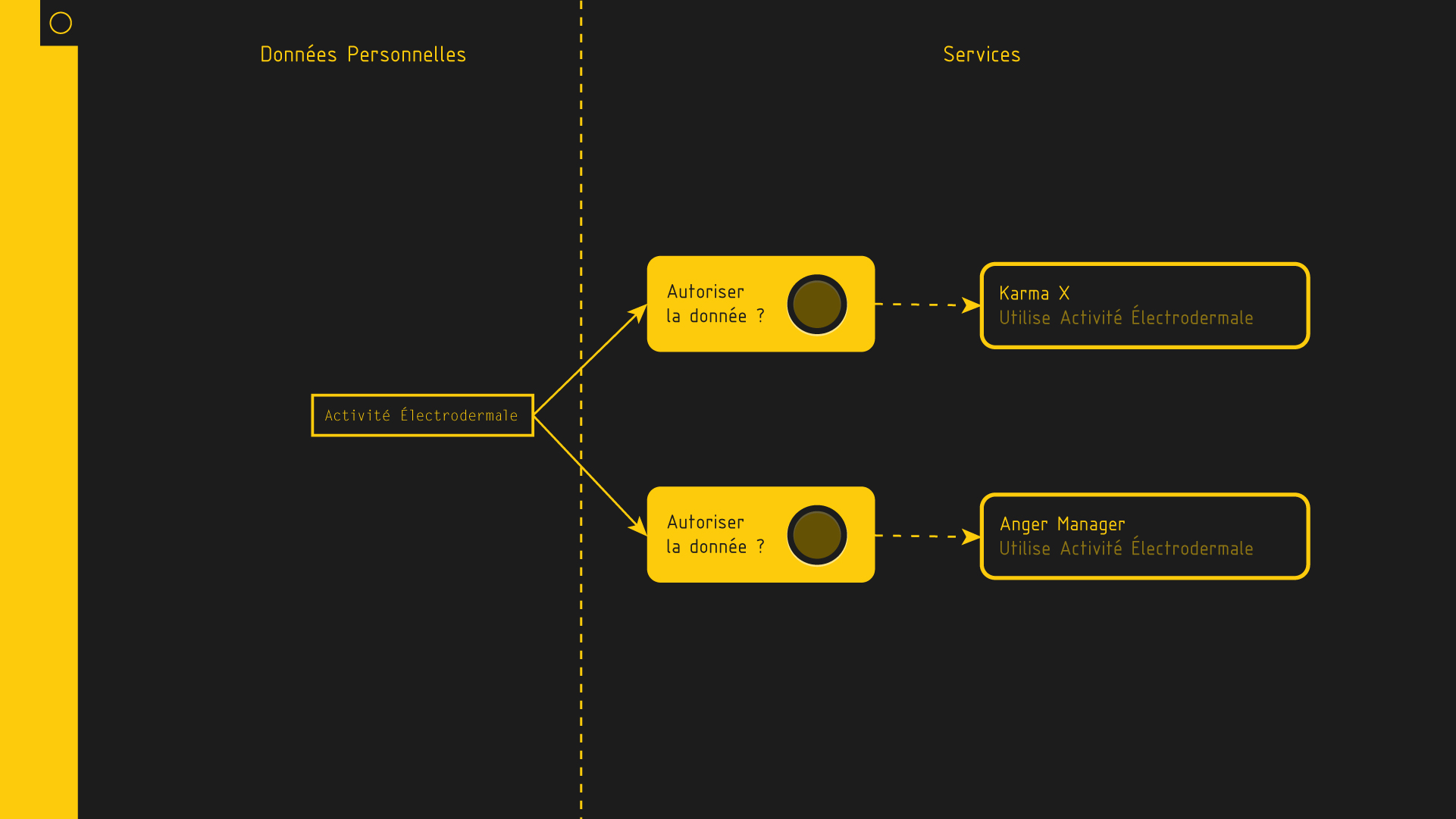

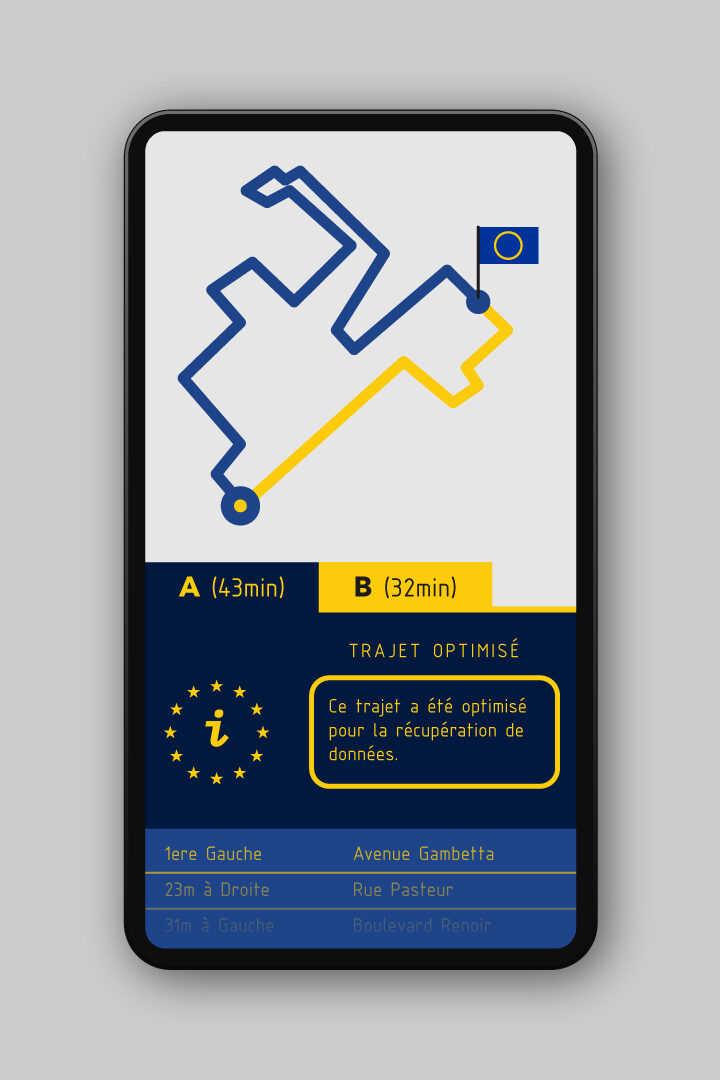

Ignore it, tick it, forget it: the biggest lie on the internet, we argue, fits in a single sentence, and we are all guilty of it. “I have read and agree to the terms and conditions.” On the next slide, we demonstrate how our system makes consenting to data collection an explicit and transparent act, and how it defies the status quo by simply moving from “no = no” to “not yes = no.” However trivial this distinction may appear, it is the very basis of any data-driven model that respects its citizens and seeks their trust. And beyond data collection itself, consent must also be granted for data processing and crossbreeding. We illustrate this with three examples of what we call ‘composite consent.’ In the first, merging two different types of data may be needed for a specific use: consent must be made explicit for each data type. In the second, a single data type can be used for different applications: user consent is required for each of them. In the third, two previously authorized applications may be merged to generate additional benefits: consent must be granted explicitly for their crossbreeding. A further example shows a route-planning interface that lets citizens pick a ‘data-reroute option,’ which replaces the shortest proposed path with a longer, often more convoluted one, optimized for gathering important environmental data. If picked, the longer route is registered as ‘data work’ and adds to the citizen’s benefits.

Case studies

The following slides introduce five fictional citizens, accompanied by their digital portraits and diverse diegetic objects.

Park Bong-Chaⓔ

Park Bong-Chaⓔ, a Korean exchange student at the Philips High-Tech Campus, is our first case study. She passively records her social interactions through a clip-on module for glasses that captures emotional response and engagement in both herself and her interlocutors (provided they are digital citizens as well), and records her sleep patterns at night through her ‘Oniri,’ an EEG- and REM-tracking hat that lets her review and transmit her data in the morning. She also actively takes part in data sanitizing missions through a dedicated application, taking on tasks such as semantic labelling, cross-referencing, duplicate removal, and the correction of false positives.

Fatima Van Houtenⓔ

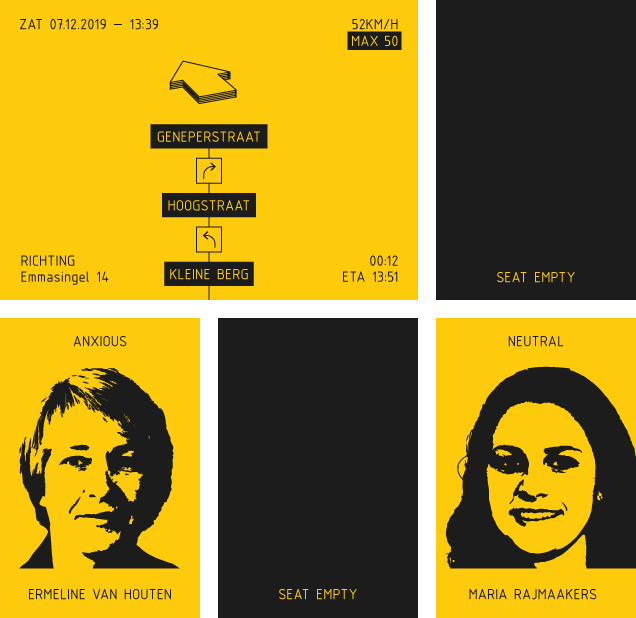

Fatima Van Houtenⓔ works as an on-demand driver for companies and individuals alike. Deeply concerned about environmental issues, she bought an all-electric vehicle years ago and, upon signing up for the European Digital Citizen program, equipped it with P4 particle sensors. These, coupled with a 360° video feed and location tracking, put her in an ideal position to create a highly accurate, up-to-date map of atmospheric pollutants, traffic conditions, and road quality. To know the quality of the air she breathes, she also wears a neck-ruff version of the P4 sensor. As part of the citizens-ride-free program, she has installed rear-facing cameras in her car’s headrests, which display real-time insights into her passengers’ emotional states and allow her to adapt her driving and conversation accordingly. Finally, she keeps her valuables in a connected document holder, which broadcasts her live geolocation while keeping her belongings secure.

Lieselotte Weijⓔ

Lieselotte Weijⓔ is a competitive sportswoman. She trains every morning and is highly interested in how her body behaves during this routine. She has fitted herself with a biometric hypodermic implant no bigger than a two-euro coin, which requires only the painless insertion of a small pin to monitor lactic acid levels in her muscles. She has also recently become active as a data counselor, intervening on suspected errors in data collection by arranging meetings with other digital citizens to clarify protocols, troubleshoot issues, and identify possible misuses, intentional or not. In doing so, she acts as a human interface between EXCEED and its users, relaying concerns as well as suggestions. One example of such a situation: Lieselotte once received notice of abnormal biometrics and, upon investigating, discovered that a woman had made her cat wear the sensors intended for her, supposedly out of “sheer curiosity.” Once fraud had been ruled unlikely, the event prompted a side debate on the potential of data generated by animals, and on the risk that some might breed animals for the sole purpose of cashing in on such docile data workers.

Henk Schenkelaarsⓔ

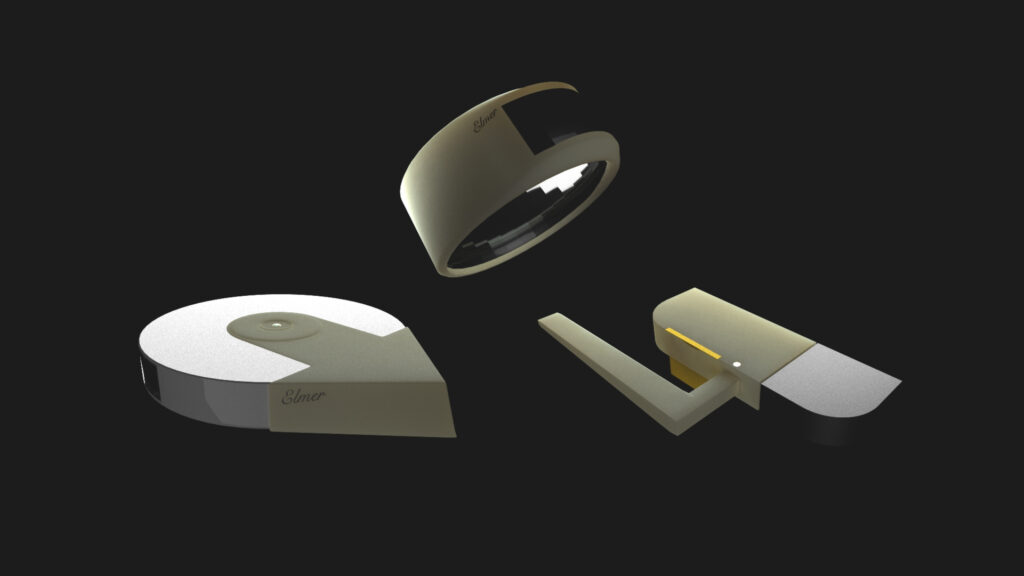

Our fourth case study is Henk Schenkelaarsⓔ, a retired widower living alone in relative autonomy thanks to an ‘Elmer.’ Loaded with visual, infrared, audio, and pressure sensors, this autonomous vacuum cleaner doubles as a connected, voice-controlled assistant that helps with domestic tasks such as calling contacts, ordering supplies, or leading the way to the bathroom at night. It is in constant communication with Henk’s vitals-tracking bracelet, which can detect any health issue and immediately call for help. In such cases, the Elmer can also remotely disable the door’s smart lock and sound an alarm to signal the emergency to Henk’s neighbors. Thanks to this continuous data collection, the Elmer is not only an invaluable service, but also a free one.

Leendert-Jan Paapⓔ

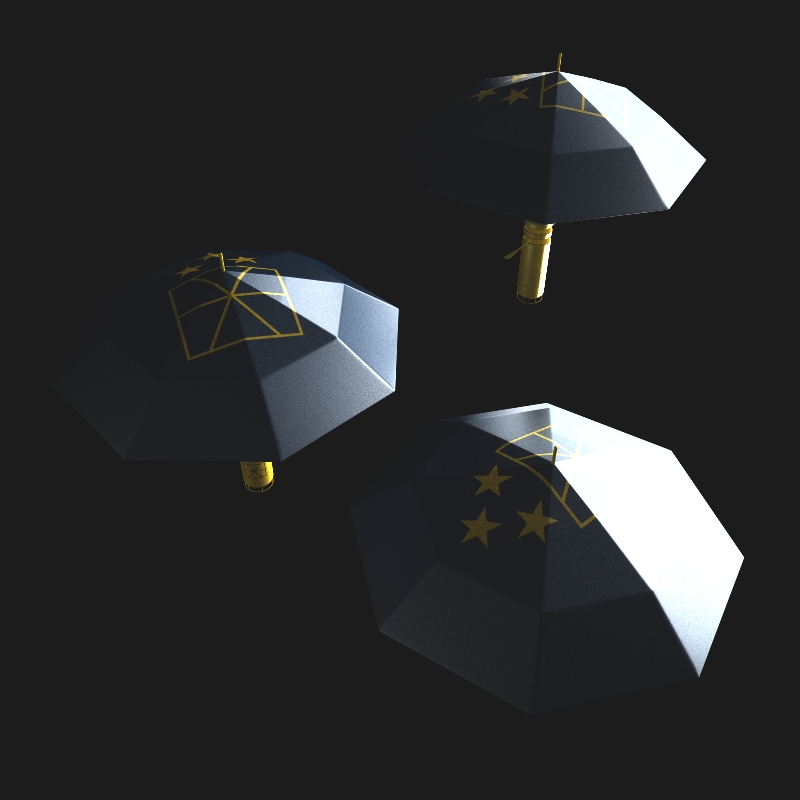

Leendert-Jan Paapⓔ is an active citizen, one could even say a data professional. Unemployed at the time of opting in, he saw in his new status an opportunity to generate revenue. Although it is not paid in currency, this revenue lets him live comfortably, a marked improvement on his former struggle to make ends meet. At the start of the day, Leendert-Jan reviews the system’s needs on the mission board and claims assignments for which he has the required skills, equipment, and time. He mostly picks those that send him to collect visual data through an array of manned cameras, mounted on tripods or hanging from RGB’s signature dronebrellas. Moving around town, he observes citizens and the environment, checks on infrastructure, and deploys his toolkit to provide valuable data about urban life. Over time, he has become something of an ambassador for the project, answering passersby’s questions and explaining the system and its core values. Spending such a considerable chunk of his day in public space, Leendert-Jan has already witnessed a few incidents, and no longer goes out without his first-aid kit.

About Privacy, Still

This model, although rooted more in mutualism than protectionism, isn’t blind to the issues of privacy and individual rights related to personal data. On the contrary, these are integrated at the very core of the system: each citizen is provided with a personal trademark, which protects them in much the same way corporate trademarks protect businesses, giving them complete control over their image, its visibility, and its uses, as well as insurance against any unlawful appropriation of it. Private data is further protected by routing all transmissions through the Euromesh, a distributed ledger infrastructure akin to the ‘Hashmesh’ developed by Rajesh Laghari’s team at IBRE. The Hashmesh is an alternative to the blockchain that does away with its iconic ‘proof-of-work’ (PoW) system and instead confirms transactions through principles of volumetric consensus and experience variability, lowering its energy footprint while increasing both resilience to attacks and transaction speed. These two safeguards (personal trademark and distributed ledger) are coupled with a complete ban on advertising and rigorous principles of partner certification, which continuously enforce the duties and responsibilities of the select private-sector third parties taking part in this economy. The protection of digital citizen data is ensured by a strict policy of EU-partner exclusivity. The EU has an unrestricted right to crawl and probe private databases at all times, and if digital citizen data, be it facial snapshots from CCTV cameras, biometric data, or basic details such as a phone number, appears in non-certified datasets, the careless collector faces enormous fines and is barred from ever applying for certification.

Welcome to The Dark Side, or: the Limits of Consent

Before wrapping things up, we bring to the forefront equally speculative imperfections to taint our polished pitch, a covert way of getting the audience riled up and ready to unleash their vitriol during the imminent in-diegesis Q&A. Of course, the ethical implications of such a system are numerous, and it would be arrogant on our part, as representatives of an EU initiative, to claim to have thought everything through, as not a day goes by without a new challenge. We go on with the bleak example of a member who recently passed away and who, as a dedicated biometrics harvester, left us with a wealth of data pertaining to his passing and the few hours after it, all punctuated by a big question mark over the limits of consent. Can this invaluable data be used for medical research? Should it be deleted immediately? Should we implement a system to stop data transmission at the moment of death, or should we rather offer an option allowing citizens to become posthumous data donors, the same way one can be an organ donor today? Are there benefits for their heirs? In fact, questions such as these are not for us, as system architects, to answer. Rather, and by design, they are for the collective to raise and debate, which brings us to the last brick of our constructed system: collaborative politics. As it stands, whenever a new issue regarding the system is raised, it is posted to the digital citizens’ dashboard to benefit from their scrutiny, collect their opinions, and foster healthy, critical thinking, a bit like a debate platform or the back end of Wikipedia. The consent-granting process (when citizens allow or deny third-party access to their data) is monitored in the same way. These are open tasks for anyone who is part of the system and willing to take a look. But then, should questions regarding the core of the system be initiated and moderated by people at all? Or can we (and should we) steer towards a more automated form of moderation, running much less on conscious opinions and considerably more on behavioral patterns, potentially eliminating suspicions of bias and corruption? Can we (and, again, should we) vote without voting?

On these questions, we end the lecture and give the audience time to ask their own. From who develops the tools used to collect data (and the possibility for citizens to take part in their design) to the issue of disconnection through hyper-connection, we discuss the many aspects of our relationship with data and digital modernity. Can this system be made so seamless that it frees us from our dependence on the connected world? Or would it, on the contrary, engulf us whole while widening the digital divide? “When will you give the answer to this travesty?” one person rightfully asks, having noticed that our presentation failed to respect the graphic standards of real EU-initiated projects. We make up a poor excuse to keep the fiction alive just a little bit longer, and skip to a slide displaying a link to a webpage for enrolling in the second wave of the social experiment which, we explain, will launch in 2020 in several regions of Europe, including Loire-Atlantique, where we are giving this talk. To those who follow the link, a single page reveals the fictional nature of the project, letting a few people in on the secret. Next comes more of a taunt than a question, one we had planted in the audience ourselves: it concerns the bad publicity our system had supposedly received after automatic doors failed to open for registered citizens, for fear of the “scary fines” incurred should the doors’ sensors capture their data. This is followed by a question about the risk of a large company like Philips flouting the rules and overtly abusing the wealth of personal data acquired through this system, which, we assure, is rendered impossible by the system’s watermarking and oversight of the data, and by the severe penalties inflicted should such watermarked data be found in third-party databases outside our ecosystem. A few more questions, and the curtain falls: none of this was true.
We explain that, although most of the elements presented are fictional, the issues raised are only too real. Relocating these into an alternative reality, we continue, allows us to address core aspects from a different perspective. Making the hypothetical real for an hour, we took our audience on a journey beyond the usual tropes of privacy and surveillance, navigating together the troubled waters of a project both desirable (in its socially aware agenda, an attempt at a post-capitalist economy) and bleak (a form of incentivized data communism opening the gate to new forms of privilege without questioning the old ones). This elaborate reductio ad absurdum was designed to keep our audience on the fence, undecided as to which side of the story to embrace, and therefore questioning the very premises of the issue.