After a first experiment labeled Version 0.9 a, the A P P A R E L project is back for a full-fledged experience of data-infused AR fashion.

::::::::::::::::

A bit of context

::::::::::::::::

A P P A R E L is, and has been, an ongoing research project in speculative fashion. It is based around the core idea that in a digital age, and a post-industrial economy, clothing wouldn’t have to be about the prefabricated identities we buy at H&M, Nike, or Calvin Klein. It would be about us, projecting who we are, how we’re feeling, or rather how we want to be spectated. In its most speculative form, this idea relies on the continued adoption of means of overlaying reality with the digital realm: mobile devices, Google goggles, Microsoft’s holo-headsets, lens-based displays, retinal projection devices. Just as we elaborated on the question in N O R M A L 0 0 1, manufactured things might well be partly or fully made of pixels at a relatively close point in the future. We’ve already dematerialized a good chunk of our world, and almost all industrially made things transit through a digital stage at production. Seeing labels on products or the colors of shoes go the way of the encyclopedia is, in fact, hardly speculation at all. So there. This is where A P P A R E L starts: what do we do with this strange, variable, and ever updatable matter we know to populate screens? And more to the point: how could this affect the way we exhibit ourselves in public? “Let’s replicate the things we’re already wearing, but then in 3D”? No.

::::::::::::::

Technicalities

::::::::::::::

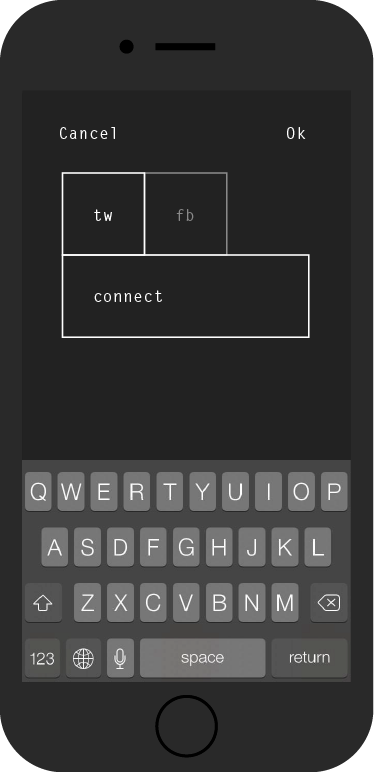

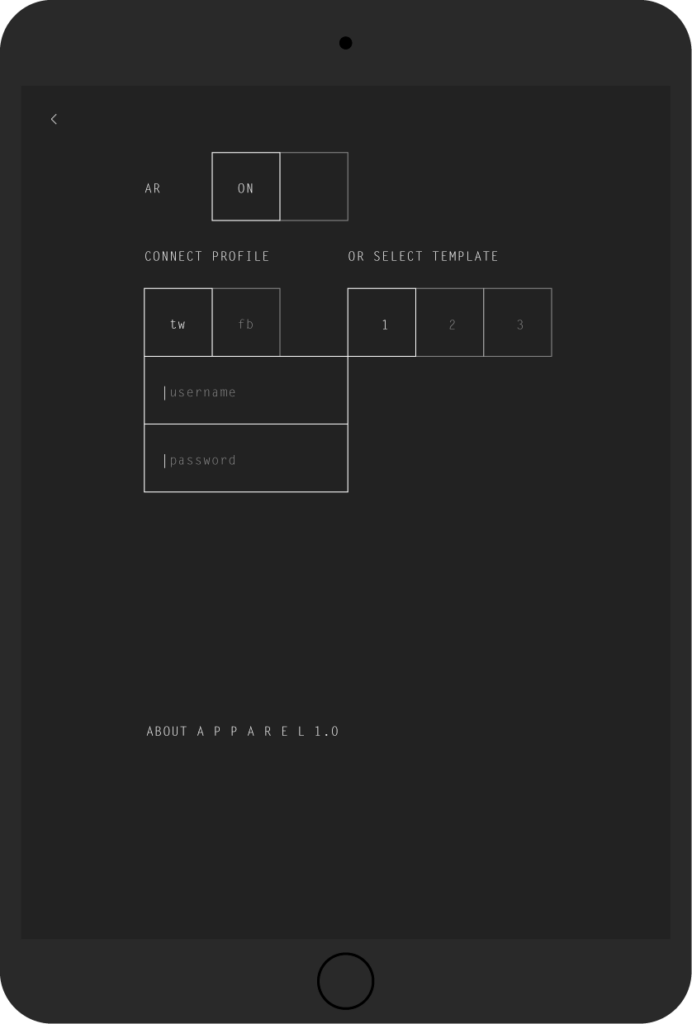

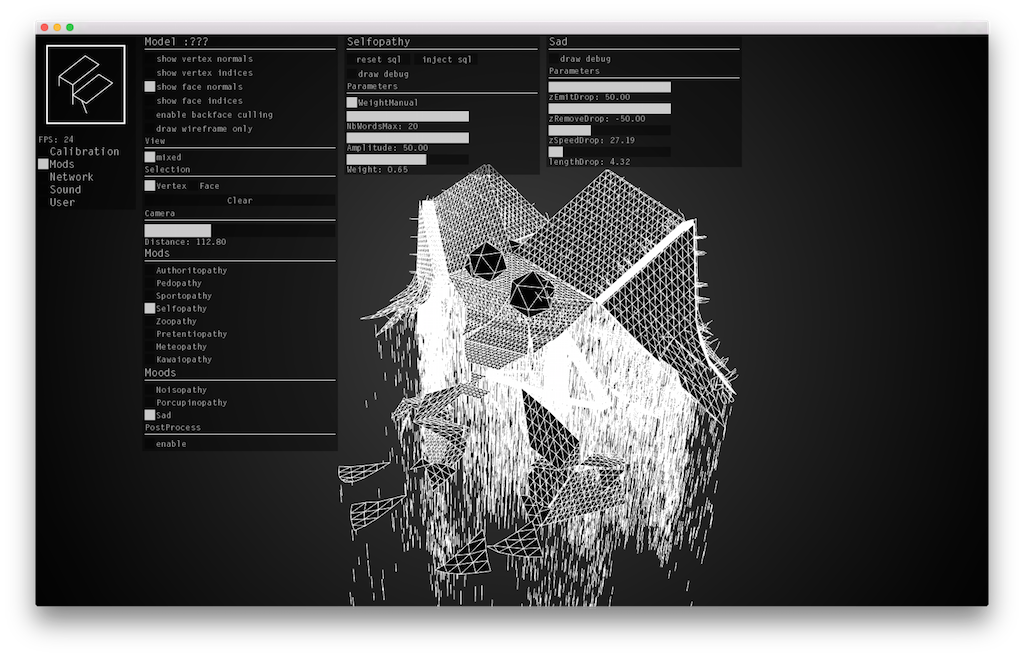

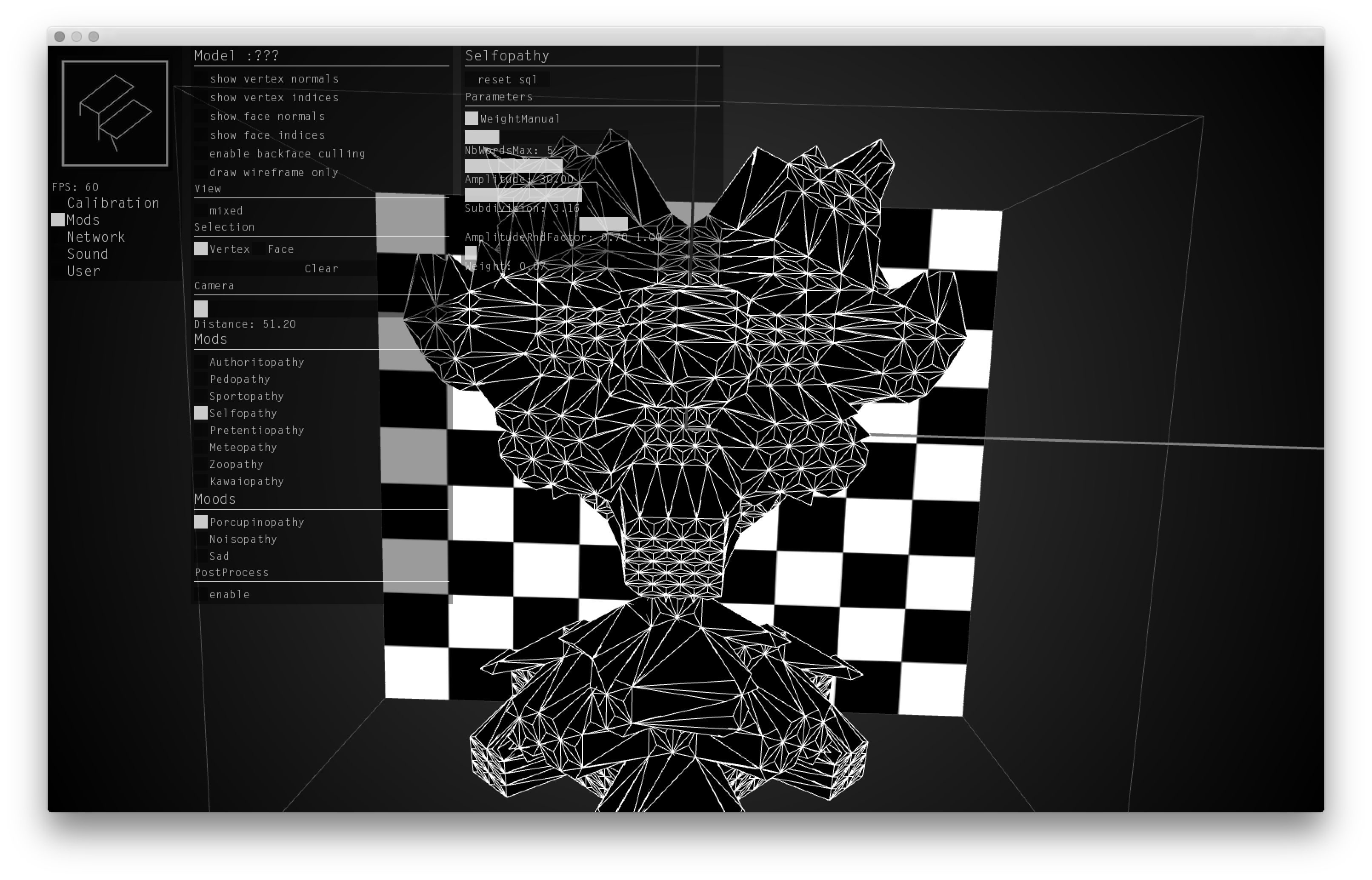

In this iteration, developed with the agile hands of Julien “V3ga” Gachadoat, A P P A R E L has now taken the form of a unisize and unisex cloak, where basic clothing needs are covered, and everything esthetic has been shifted to the realm of augmented reality. There, you get to connect your Twitter account (Facebook may follow soon), which is scanned by an algorithm for various sorts of semantic data. This data pool is then transmitted to shape engines, called “MODS”, that react parametrically and modify the shape of A P P A R E L’s 3D polygonal layer according to your word usage. Added to that are MOODS: just as parametric, but instead of slowly following your online activities, these variables are activated on demand to illustrate your… mood, yes. In essence, this is like wearing a personal infographic.
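For the curious, here is a rough idea of what such a pipeline could look like, written as a minimal Python sketch. It is not the project's actual code: the category word lists, the `semantic_weights` function, and the `spike_mod` shape engine are all hypothetical names invented for illustration, and the real MODS may work quite differently. The sketch only shows the general principle of turning word usage into a number, then letting that number deform a set of 3D vertices.

```python
import re
from collections import Counter

# Hypothetical word lists: the semantic categories the real app scans for
# are not documented here, so these are placeholders.
CATEGORIES = {
    "joy": {"love", "great", "happy", "fun"},
    "anger": {"hate", "angry", "awful", "worst"},
}


def semantic_weights(posts):
    """Return, per category, the share of all words that belong to it (0.0 to 1.0)."""
    words = [w for post in posts for w in re.findall(r"[a-z']+", post.lower())]
    total = len(words) or 1  # avoid dividing by zero on an empty feed
    counts = Counter()
    for word in words:
        for name, vocab in CATEGORIES.items():
            if word in vocab:
                counts[name] += 1
    return {name: counts[name] / total for name in CATEGORIES}


def spike_mod(vertices, amount):
    """Hypothetical MOD: push every other vertex away from the origin.

    `amount` is the parameter fed by the data pool; 0.0 leaves the mesh untouched.
    """
    deformed = []
    for index, (x, y, z) in enumerate(vertices):
        scale = 1.0 + amount if index % 2 == 0 else 1.0
        deformed.append((x * scale, y * scale, z * scale))
    return deformed


if __name__ == "__main__":
    weights = semantic_weights(["I love this", "worst day"])
    mesh = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
    print(weights)
    print(spike_mod(mesh, weights["joy"]))
```

A MOOD, in this picture, would simply be the same kind of function with `amount` set by hand on demand rather than derived from the feed.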

A P P A R E L is made of two applications. There’s of course a mobile one (iOS for now, forgive us Android users), which will allow you to experience the piece in real life if you come to an exhibition, try it on a test target, or just use it without AR. And there’s a desktop app for development and calibration, which we encourage you to look at should you be interested in building your own MODS for an upcoming version.

This project has benefited from the help of the French National Center of Cinematography (CNC — DICRéAM).

::::::::::::::::::::::::::::::::::::::::::::

Grab a seat by a future catwalk

Download the iOS app (free)

Watch the documentary

Read the fiction

Listen to the soundtrack

Git your hands dirty with code

Check the dedicated project page